Why AI Requires Transparency in Financial Infrastructure: 11 Critical Strategic Justifications

This article is part of the broader Investment Infrastructure educational framework, explaining why AI requires transparency in financial infrastructure across eleven strategic justifications, from algorithm accountability and regulatory compliance to the Black Box Problem, Explainable AI standards, and the EU AI Act.

Educational Notice

This article is provided for informational and educational purposes only. It does not constitute legal, financial, or investment advice. Artificial intelligence technologies and financial infrastructure frameworks vary by jurisdiction and institutional design. Professional consultation should be sought before relying on any financial monitoring system.

Introduction

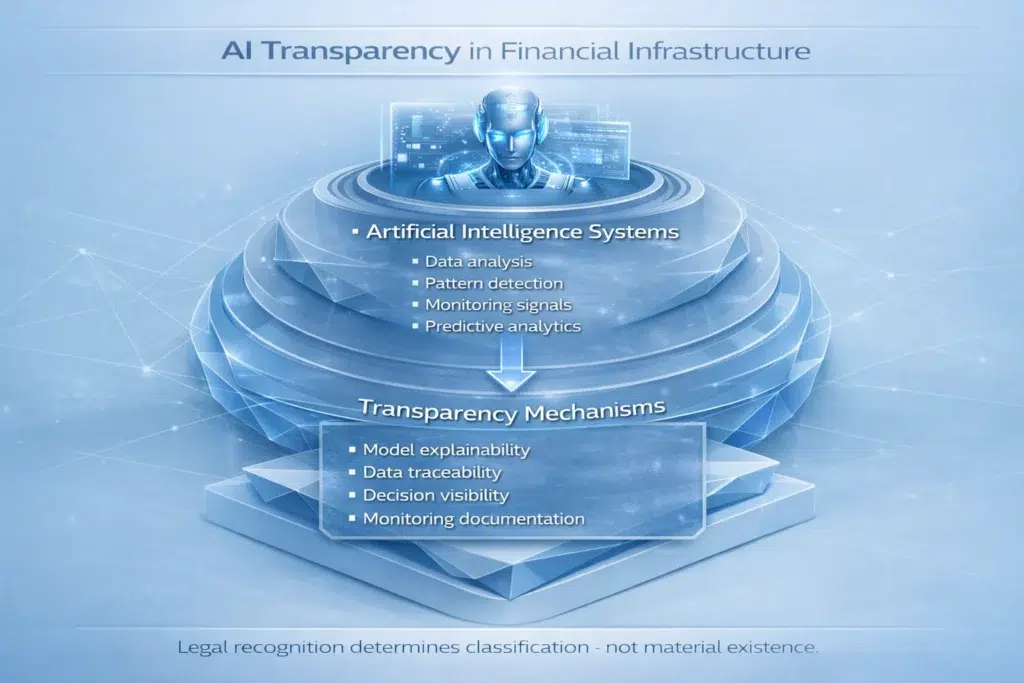

Understanding why AI requires transparency in financial infrastructure has become one of the most important governance questions in modern finance. Investment platforms, banking networks, digital asset ecosystems, and financial infrastructure providers are integrating AI-driven monitoring systems to analyze financial data, detect risks, and support operational oversight. As these systems expand, a critical question emerges: can institutions, regulators, and investors trust what they cannot see?

Artificial intelligence provides powerful analytical capabilities that allow financial systems to process large datasets and identify patterns across transaction activity, market behavior, and operational processes. But as AI systems influence monitoring frameworks and analytical decisions, transparency becomes essential. Without it, automated systems may create governance challenges, compliance risks, and operational uncertainty that undermine the very stability they are meant to support.

Think of it this way: AI is like an autopilot for a financial system. If the autopilot suddenly turns the plane left, the pilot (the bank, the regulator, the compliance officer) needs to know why. If the autopilot is a Black Box that does not explain its decisions, the pilot cannot trust it. Transparency is the dashboard that tells the pilot exactly what the AI is doing and why it is doing it. Without that dashboard, institutions are not using a tool. They are hoping one works. In finance, hope is not a strategy.

To understand the broader environment where these systems operate, see the Investment Infrastructure pillar. For a broader conceptual overview, see AI in Investment Infrastructure Explained.

The Bank for International Settlements (BIS), the IMF, and the OECD all analyze how artificial intelligence influences financial stability, governance frameworks, and regulatory oversight. A recurring theme in their work is that transparency is not optional for AI in finance. It is foundational.

In Simple Terms: Why AI Requires Transparency in Financial Infrastructure

Why AI requires transparency in financial infrastructure comes down to accountability. Artificial intelligence systems analyze financial data and generate analytical outputs that influence monitoring decisions. Transparency ensures that institutions understand how AI models interpret financial data, how risk monitoring signals are generated, how automated systems influence operational monitoring, and how infrastructure decisions are supported by AI analytics.

Without transparency, institutions may struggle to evaluate the reliability and governance of automated monitoring systems. Transparent AI helps ensure that artificial intelligence operates within accountable, auditable, and regulated financial environments. This is why AI requires transparency in financial infrastructure: not as a preference, but as a structural requirement of responsible deployment.

Solving the Black Box Problem in Finance with Explainable AI

The fundamental governance problem facing artificial intelligence in financial infrastructure is the Black Box Problem. Many complex machine learning models, such as deep neural networks and large ensembles, behave as closed systems: financial data goes in, a risk signal or analytical output comes out. What happens in the middle, the logic, the weighting of factors, and the decision-making process, is difficult or impossible for a human reviewer to inspect.

In modern financial systems, a Black Box approach is a critical failure point because it undermines three things that financial governance depends on. Accountability: if the model makes a costly error, no one can determine which factor caused it, which makes it difficult to assign responsibility or prevent recurrence. Trust: regulators, compliance officers, and institutional leadership cannot fully trust a system they cannot understand. Auditability: independent reviewers cannot inspect the logic of an opaque model, which makes regulatory approval and ongoing oversight far harder to achieve.

The consequences of Black Box AI in finance are not merely theoretical. Algorithmic flash crashes (rapid market price collapses in which automated trading systems react to one another faster than humans can follow the cascade) have shown how opaque automated systems can destabilize markets in minutes. Biased credit scoring systems have denied loans based on factors that, when later examined, reflected historical discrimination patterns embedded in the training data; because the models were opaque, human reviewers did not see those patterns at the time.

The Financial Standard: Explainable AI (XAI)

To address this structural risk, institution-grade financial infrastructure must move away from standard Black Box models toward Explainable AI (XAI), often called White Box or Glass Box models. XAI is designed for the high-accountability world of finance through three defining characteristics. First, Transparency First: XAI provides a clear dashboard of its decision-making, showing which specific data points (such as a spike in trading volume or a drop in a tenant’s credit score) were the most influential factors. Second, the Why Feature: XAI does not just produce a signal. It explains the reasoning. Instead of outputting only “HIGH RISK,” an XAI system outputs “HIGH RISK because rent collections are down 15% and vacancy has doubled in the past 60 days.” Third, Audit-Ready: independent auditors and regulators can retrace the XAI model’s steps, inspect its parameters, and confirm it is operating within approved governance and legal limits.
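The "Why Feature" described above can be sketched with a deliberately simple additive score, where each feature's contribution is visible by construction. This is a minimal illustration, not a production model: the feature names, weights, and threshold below are hypothetical values chosen to mirror the rent and vacancy example in the text.

```python
from dataclasses import dataclass

# Hypothetical weights for an illustrative additive risk score (not from any real model).
WEIGHTS = {
    "rent_collection_drop_pct": 0.04,   # per percentage point of decline
    "vacancy_increase_ratio": 0.30,     # 1.0 means vacancy rose by 100% (doubled)
    "tenant_credit_score_drop": 0.005,  # per point of decline
}
HIGH_RISK_THRESHOLD = 0.8  # assumed cut-off for this sketch


@dataclass
class Explanation:
    label: str
    score: float
    reasons: list  # (feature, contribution) pairs, largest contribution first


def explain_risk(features: dict) -> Explanation:
    # Each contribution is weight * value, so the score decomposes exactly by feature.
    contributions = {name: WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS}
    score = sum(contributions.values())
    label = "HIGH RISK" if score >= HIGH_RISK_THRESHOLD else "NORMAL"
    reasons = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return Explanation(label, score, reasons)


result = explain_risk({
    "rent_collection_drop_pct": 15,
    "vacancy_increase_ratio": 1.0,
    "tenant_credit_score_drop": 0,
})
top_two = ", ".join(f"{name} (+{value:.2f})" for name, value in result.reasons[:2])
print(f"{result.label} because: {top_two}")
```

The design choice here is that the explanation is not computed separately from the score: the same per-feature contributions produce both, so the stated reasons cannot drift away from the actual decision logic.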

The EU AI Act (the European Union’s landmark AI regulation, the world’s first comprehensive legal framework governing artificial intelligence) takes a risk-based approach. Its High-Risk category includes AI systems used to evaluate the creditworthiness or credit score of individuals and systems used for risk assessment and pricing in life and health insurance, and it imposes transparency, documentation, and human oversight obligations on those systems. Other financial uses of AI are not automatically High-Risk under the Act. For the covered uses, transparency is not just a governance principle but a legal compliance requirement in the EU, and it is an emerging expectation globally. For regulatory context, see Why Compliance Matters in Tokenized Finance.

Understanding the difference between Black Box risk and White Box requirements is central to building institutional-grade AI financial infrastructure. Without XAI, an institution is not using a tool. It is accepting an unknown risk.

Why Transparency Is Critical in AI Financial Systems

Financial infrastructure requires strong governance, reliable monitoring systems, and regulatory compliance frameworks. Artificial intelligence can support these functions by analyzing complex datasets and detecting patterns across financial activity. However, when automated systems analyze financial data and generate analytical outputs, institutions must ensure those systems remain transparent and accountable.

Transparency in AI-driven financial infrastructure helps institutions maintain trust, regulatory compliance, and operational oversight across digital financial environments. It becomes especially important when AI systems interact with monitoring frameworks, governance processes, and compliance systems. A deeper discussion of structural limitations affecting AI systems can be found at Limitations of AI in Investment Infrastructure Explained.

Why AI Requires Transparency in Financial Infrastructure: 11 Strategic Justifications

The following sections explain eleven important reasons that illustrate why AI requires transparency in financial infrastructure, organized from foundational accountability through long-term institutional adoption.

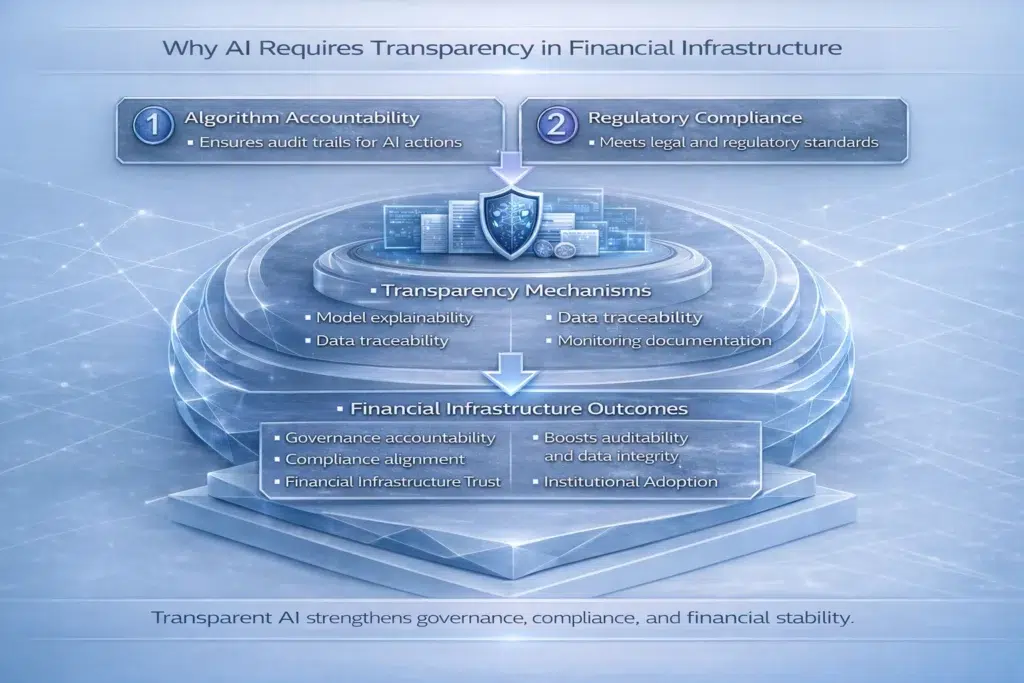

Justification 1: Algorithm Accountability

One of the most important reasons why AI requires transparency in financial infrastructure is algorithm accountability. Financial institutions must be able to explain how automated systems generate monitoring signals and analytical outputs. Transparent AI models, particularly XAI systems, allow institutions to trace how data is processed and how conclusions are reached. Accountability ensures that institutions remain responsible for the outcomes generated by automated systems. When a Black Box model causes harm, the cause is hard to trace and responsibility is hard to assign. When a White Box model causes harm, the logic is visible, correctable, and attributable.

Justification 2: Regulatory Compliance

Financial regulators require institutions to demonstrate how monitoring systems operate and how risk signals are generated. In the regulatory context, the transparency requirement is increasingly codified in law. The EU AI Act classifies certain financial uses of AI, such as evaluating the creditworthiness of individuals, as High-Risk, requiring documentation, transparency, and human oversight. Beyond the EU, regulators in the United States, UK, and Asia-Pacific are developing their own guidance and frameworks. Transparent AI models make it far easier for regulators to evaluate compliance frameworks within financial infrastructure. Opaque Black Box systems create regulatory friction that can delay or block institutional deployment.

Justification 3: Risk Monitoring Reliability

Risk monitoring systems are essential components of financial infrastructure. Artificial intelligence can analyze large datasets to detect patterns associated with financial risk far faster than human analysts. However, institutions must verify that these monitoring systems operate reliably. Transparency allows risk management teams to validate the signals generated by AI monitoring systems, confirm that they reflect genuine risk factors rather than data artifacts or model errors, and intervene when the system produces anomalous outputs. Transparency matters here because unreliable monitoring creates false confidence: institutions believe they are protected when they may not be. A deeper explanation of AI monitoring functions is available in What Role Does AI Play in Risk Management Infrastructure?
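One way a risk team might validate monitoring signals, sketched under simple assumptions: compare the cases the model flagged with the cases human reviewers later confirmed. The function name `alert_quality` and the case IDs are hypothetical.

```python
def alert_quality(alerts: set, incidents: set) -> tuple:
    """Return (precision, recall) for model alerts against reviewer-confirmed incidents.

    alerts:    case IDs flagged by the monitoring model
    incidents: case IDs that reviewers confirmed as genuine risk events
    """
    true_positives = len(alerts & incidents)
    precision = true_positives / len(alerts) if alerts else 0.0
    recall = true_positives / len(incidents) if incidents else 0.0
    return precision, recall


# Synthetic example: four alerts, three confirmed incidents, two in common.
precision, recall = alert_quality({"c1", "c2", "c3", "c4"}, {"c2", "c4", "c7"})
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Low precision means analysts are chasing noise, while low recall is the "false confidence" case: genuine risk events are passing through unflagged.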

Justification 4: Investor Protection

Financial infrastructure must protect investors by ensuring that monitoring systems operate fairly and consistently. Transparency allows institutions to evaluate whether AI systems produce unbiased analytical results. Biased credit scoring, algorithmic lending discrimination, and automated portfolio management systems that systematically disadvantage certain investor profiles are all failures that opaque AI makes harder to detect. Transparent monitoring frameworks support investor protection mechanisms by making bias detectable before it causes harm at scale. This is a key reason why AI requires transparency in financial infrastructure from a social and regulatory standpoint.

Justification 5: Model Auditability

Institutions must be able to audit artificial intelligence systems to evaluate their performance and reliability over time. Auditability allows independent reviewers to analyze how models operate, how training data was selected and validated, how data is processed during inference, and whether monitoring systems produce accurate and consistent outputs across different market conditions. XAI systems are designed to be Audit-Ready. Black Box systems are not. Model auditability is therefore a central reason why AI requires transparency in financial infrastructure, because regulators and external examiners increasingly expect institutions to be able to demonstrate how a model behaves.
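As a rough illustration of what an audit-ready decision record could contain, the sketch below stores the model version, a fingerprint of the inputs, the output, and the stated reasons. The field names and the `risk-model-0.1` identifier are assumptions made for this example, not a regulatory schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(payload: dict) -> str:
    """SHA-256 of the canonical (sorted-key) JSON form of the payload."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_audit_record(model_version: str, inputs: dict, output: str, reasons: list) -> dict:
    """Assemble one reviewable record per model decision."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_fingerprint": fingerprint(inputs),  # lets an auditor match stored inputs later
        "output": output,
        "reasons": reasons,
    }


record = build_audit_record(
    model_version="risk-model-0.1",  # hypothetical identifier
    inputs={"vacancy_rate": 0.12, "rent_collected_pct": 85},
    output="HIGH RISK",
    reasons=["rent collections down 15%", "vacancy doubled in 60 days"],
)
print(json.dumps(record, indent=2))
```

Sorting keys before hashing means the same inputs always yield the same fingerprint regardless of field order, which is what allows a later reviewer to confirm that archived inputs match the record.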

Justification 6: Governance Oversight

Governance frameworks help ensure that financial infrastructure operates responsibly and in compliance with regulatory standards. AI systems must operate within governance frameworks that allow oversight teams to evaluate model behavior, monitoring results, and output quality continuously. Transparent AI models, particularly White Box systems, make it possible for governance teams to supervise automated systems without requiring deep technical expertise in machine learning. This democratizes governance oversight: compliance officers, risk committees, and board members can engage meaningfully with AI system performance when the logic is explainable. Opaque systems create governance dependency on the AI developers alone, which is itself a governance risk.

Justification 7: Bias Detection

Artificial intelligence models may unintentionally reflect biases present within training datasets. In financial systems, this can manifest as systematic disadvantage for certain borrower profiles, geographic areas, or asset classes, not because of explicit discrimination but because the historical data used to train the model embedded those patterns. Transparency allows institutions to identify and address algorithmic bias before it affects monitoring outcomes at scale. XAI systems can surface which features most strongly influence a decision, making bias visible and correctable. Bias detection is therefore another important reason why AI requires transparency in financial infrastructure: the consequences of undetected bias in lending, insurance, or investment monitoring can cause serious harm to individuals and expose institutions to significant regulatory liability.
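A basic bias check can be sketched by comparing approval rates across groups. The example below computes a disparate impact ratio on synthetic data; the 0.8 review threshold is an assumption borrowed from the "four-fifths" rule of thumb used in US employment-selection guidance, not a financial regulatory standard.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> {group: approval rate}."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no approvals in any group, so the rates are equal
    return min(rates.values()) / highest


# Synthetic data for illustration only: group A approved 8 of 10, group B 5 of 10.
sample = [("A", True)] * 8 + [("A", False)] * 2 + [("B", True)] * 5 + [("B", False)] * 5
ratio = disparate_impact_ratio(sample)
flagged = ratio < 0.8  # assumed review threshold
print(f"ratio={ratio:.3f} flagged_for_review={flagged}")
```

A ratio below the threshold does not prove discrimination. It signals that the feature contributions behind the gap deserve a closer look, which is only possible when the model is explainable.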

Justification 8: Operational Risk Control

Operational risks arise when financial systems experience infrastructure failures, monitoring errors, or technical disruptions. Transparent AI systems allow institutions to evaluate monitoring outputs and detect anomalies within operational processes before they escalate. When an AI system begins producing outputs that deviate from expected parameters, a transparent model allows rapid diagnosis of the cause. A Black Box system offers no such diagnostic capability: institutions can see that something is wrong but cannot determine what or why. Transparency therefore directly reduces operational risk within financial infrastructure by enabling faster, more informed response to system anomalies.
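Detecting that outputs "deviate from expected parameters" can be illustrated with the Population Stability Index (PSI), a drift measure commonly used in credit risk model monitoring. This is a minimal sketch: the bin shares are synthetic, and the 0.1 and 0.25 cut-offs are widely quoted rules of thumb treated here as assumptions.

```python
import math


def psi(expected_share, actual_share, eps=1e-6):
    """Population Stability Index between two binned distributions.

    Both arguments are lists of bin shares that each sum to 1.
    eps guards against log(0) when a bin is empty.
    """
    total = 0.0
    for expected, actual in zip(expected_share, actual_share):
        expected = max(expected, eps)
        actual = max(actual, eps)
        total += (actual - expected) * math.log(actual / expected)
    return total


def drift_status(value, warn=0.1, alert=0.25):
    """Map a PSI value to a coarse status using assumed thresholds."""
    if value >= alert:
        return "alert"
    if value >= warn:
        return "investigate"
    return "stable"


baseline = [0.25, 0.25, 0.25, 0.25]  # score distribution at model approval (synthetic)
current = [0.10, 0.20, 0.30, 0.40]   # score distribution this week (synthetic)
value = psi(baseline, current)
print(f"PSI={value:.4f} status={drift_status(value)}")
```

A drift signal only says that something changed. Diagnosing what changed and why still depends on being able to inspect the model's inputs and feature contributions.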

Justification 9: Financial Infrastructure Trust

Financial systems depend on trust between institutions, investors, and regulators. Transparent AI systems strengthen trust because all stakeholders can understand how automated monitoring frameworks operate, what data they use, how they weight different factors, and what triggers their outputs. This trust-building function represents another important reason why AI requires transparency in financial infrastructure. Institutional adoption of AI tends to depend on whether non-technical stakeholders can understand the system’s behavior and feel confident in it. XAI is the mechanism through which AI earns institutional trust rather than demanding it on faith.

Justification 10: Integration With Blockchain Transparency

Artificial intelligence monitoring systems may operate alongside blockchain transparency frameworks. Blockchain transparency tools allow institutions to verify transaction records and financial activity across decentralized networks. AI and blockchain transparency are complementary: AI analyzes patterns across large datasets while blockchain provides verifiable, tamper-resistant records of the transactions being analyzed. When both systems are transparent, institutions have a complete picture: the data is verifiable and the analysis is explainable. Relevant resources include What Is On-Chain Transparency? and What Is Proof of Reserve in Blockchain Systems? These complementary technologies strengthen financial transparency across infrastructure systems.
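The "tamper-resistant records" idea can be illustrated with a hash-linked log, where each entry commits to the one before it. This sketch shows only the linking mechanism. It is not a blockchain (there is no network, consensus, or signing), and the transaction fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(chain: list, payload: dict) -> None:
    """Add an entry whose hash covers both its payload and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "prev_hash": prev_hash,
                  "hash": _digest(prev_hash, payload)})


def verify(chain: list) -> bool:
    """Recompute every hash in order; any edited payload breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"tx": "T-1", "amount": 100})
append(ledger, {"tx": "T-2", "amount": 250})
intact = verify(ledger)

ledger[0]["payload"]["amount"] = 999  # simulate tampering with a past record
tampering_detected = not verify(ledger)
print(intact, tampering_detected)
```

An AI monitoring system that reads from records like these can show an auditor that the data it analyzed is the data that was originally written, which complements an explanation of how the analysis itself was performed.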

Justification 11: Long-Term Institutional Adoption

Institutional investors and financial institutions often require transparent infrastructure before adopting emerging technologies at scale. This is why AI requires transparency in financial infrastructure at the market adoption level: Black Box systems face structural barriers to institutional deployment because they cannot satisfy the governance, regulatory, and fiduciary requirements that institutional adoption demands. XAI systems that provide explainable outputs, audit trails, and regulatory documentation are structurally positioned for long-term institutional adoption. Transparency is therefore not just a governance virtue but a commercial prerequisite for AI in institutional finance.

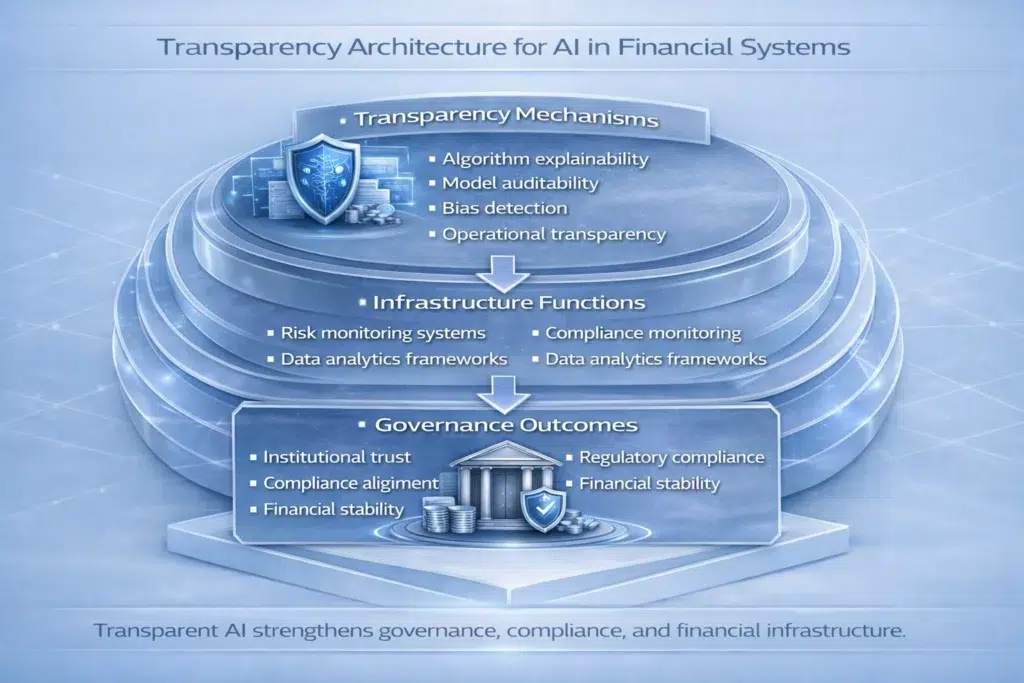

Architecture Snapshot: Transparency Benefits Across Infrastructure

The following table summarizes how each transparency dimension strengthens financial infrastructure when artificial intelligence systems support monitoring functions.

| Transparency Factor | Infrastructure Benefit | Governance Impact |
|---|---|---|
| Algorithm Accountability (XAI) | Clear, traceable decision logic | Responsibility can be assigned and corrected |
| Model Auditability | Independent evaluation of model behavior | Regulatory approval and ongoing compliance |
| Bias Detection | Fair and consistent monitoring outputs | Investor protection and regulatory alignment |
| Operational Transparency | Reliable risk monitoring with rapid diagnosis | Systemic stability and anomaly response |
| Regulatory Transparency (EU AI Act) | Compliance documentation and explainability | Institutional trust and long-term adoption |

Institutional Perspective

Financial institutions evaluating artificial intelligence technologies must consider transparency as a key governance factor, not an optional feature. Evaluation criteria increasingly include explainability of AI models (can non-technical stakeholders understand the outputs?), governance oversight mechanisms (can compliance officers and risk committees monitor the system?), auditability of analytical systems (can external reviewers inspect model behavior?), regulatory compliance integration (does the system meet EU AI Act High-Risk requirements or equivalent frameworks?), and reliability and documentation of training datasets.

Research from the BIS, IMF, and OECD on AI and financial stability points in the same direction: the need for transparency is inseparable from the broader question of how AI can be responsibly integrated into systemically important financial systems.

Frequently Asked Questions

Why does AI require transparency in financial infrastructure?

AI requires transparency in financial infrastructure because transparent systems allow institutions to evaluate how automated monitoring tools generate analytical outputs, detect bias, assign accountability, satisfy regulatory requirements, and maintain governance oversight. Without transparency, institutions cannot validate reliability, regulators cannot readily approve deployment, and investors cannot be protected from opaque automated decisions. The Black Box Problem undermines all of these governance functions.

What is Explainable AI (XAI) in financial systems?

Explainable AI (XAI), also called White Box or Glass Box AI, refers to artificial intelligence models whose analytical processes can be understood and interpreted by humans without specialized machine learning expertise. XAI provides a clear decision dashboard showing which data factors drove each output, explains the Why behind each signal rather than just the What, and is designed to be Audit-Ready for regulatory review. XAI is the institutional standard for transparent AI in financial infrastructure because it directly addresses the Black Box Problem.

What is the EU AI Act and why does it matter for AI in finance?

The EU AI Act is the world’s first comprehensive legal framework governing artificial intelligence. It takes a risk-based approach, and its High-Risk category covers certain financial uses of AI, notably systems that evaluate the creditworthiness or credit score of individuals and systems used for risk assessment and pricing in life and health insurance. High-Risk AI systems must meet mandatory transparency, human oversight, and documentation requirements before they are placed on the market or put into service. For those uses, transparency is a legal compliance requirement in the EU, not just a governance best practice.

How do regulators evaluate AI systems in finance?

Regulators evaluate transparency, governance frameworks, monitoring reliability, bias documentation, and audit trails when assessing artificial intelligence technologies within financial infrastructure. The ability to demonstrate XAI-level explainability is increasingly a prerequisite for regulatory approval in major jurisdictions. Black Box systems that cannot provide this documentation face significant barriers to regulatory acceptance.

Can blockchain transparency improve AI monitoring?

Yes. Blockchain transparency systems provide verifiable, tamper-resistant transaction records that complement AI monitoring tools. When blockchain records the data that AI analyzes, and XAI explains how the AI analyzed it, institutions achieve end-to-end transparency. This combination represents the standard increasingly expected by institutional-grade financial infrastructure. For context, see What Is On-Chain Transparency? and What Is Proof of Reserve in Blockchain Systems?

Does AI replace governance oversight in financial systems?

No. Artificial intelligence supports monitoring processes but must operate alongside governance frameworks and regulatory oversight, not instead of them. AI requires transparency in financial infrastructure precisely because it amplifies human decision-making capacity rather than replacing human accountability. XAI is designed to keep humans meaningfully informed and in control, even as AI systems process data at scales no human team could match alone.

Conclusion

Understanding why AI requires transparency in financial infrastructure is essential for responsible adoption of artificial intelligence within financial systems. Artificial intelligence can strengthen financial monitoring capabilities by analyzing large datasets and detecting complex patterns across financial activity. But institutions must ensure these systems operate within transparent governance frameworks that allow explainability, auditability, bias detection, and regulatory compliance.

The Black Box Problem is not a technical inconvenience. It is a fundamental governance failure that creates accountability gaps, trust deficits, and regulatory barriers. The solution, Explainable AI (XAI), represents the institutional standard that responsible AI deployment in finance must meet. The EU AI Act’s High-Risk classification of uses such as credit scoring formalizes this requirement in law for those applications. When transparency mechanisms through XAI are combined with governance oversight, regulatory compliance frameworks, and blockchain verification tools, artificial intelligence can operate responsibly within modern financial infrastructure.

Transparency is increasingly important in tokenized investment infrastructure, where artificial intelligence systems interact with blockchain verification mechanisms and automated governance models.

Sources and Regulatory References

- Bank for International Settlements (BIS): https://www.bis.org

- International Monetary Fund (IMF): https://www.imf.org

- Organisation for Economic Co-operation and Development (OECD): https://www.oecd.org

- EU AI Act (Official Text): https://eur-lex.europa.eu

Last updated: March 2026

Explore AI in Investment Infrastructure

- AI in Investment Infrastructure Explained

- How AI Is Used in Investment Infrastructure

- What Role Does AI Play in Risk Management Infrastructure?

- Limitations of AI in Investment Infrastructure Explained

- AI vs Rule-Based Systems in Investment Platforms

- On-Chain Transparency Explained (cross-pillar)

- Transparency Reduces Risk in Tokenized Assets (cross-pillar)

- On-Chain vs Off-Chain Transparency (cross-pillar)

- Why Compliance Matters in Tokenized Finance (cross-pillar)

- Investment Infrastructure Hub