Limitations of AI in Investment Infrastructure Explained: 13 Significant Structural Constraints

This article is part of the broader Investment Infrastructure educational framework, examining the limitations of AI in investment infrastructure across thirteen structural constraints that every institution must evaluate before deploying artificial intelligence within financial systems.

Educational Notice

This article is provided for informational and educational purposes only. It does not constitute legal, financial, or investment advice. Artificial intelligence technologies and financial infrastructure frameworks vary by jurisdiction and institutional design. Professional consultation should be sought before relying on any automated financial monitoring system.

Introduction

Here is a useful way to think about AI in financial infrastructure. Imagine a very diligent librarian who has read every book in the library. Every transaction record. Every market report. Every historical dataset the institution has ever produced. That librarian is fast, consistent, and never gets tired. But the librarian has some important constraints that matter enormously before you hand over major decisions.

If the books contain errors, the librarian’s answers will be wrong. If a question requires knowledge of a book that hasn’t been written yet, the librarian cannot help. And occasionally, when pressed for an answer the librarian doesn’t quite know, they may confidently invent one. You still need a Library Director, a human expert, to check the librarian’s work before acting on it at scale.

Understanding the limitations of AI in investment infrastructure is essential as financial systems increasingly adopt artificial intelligence for monitoring, analytics, and operational decision environments. AI allows investment platforms and financial institutions to analyze large datasets, detect patterns, and support risk monitoring across complex financial systems. But powerful tools require honest evaluation. The limitations of AI in investment infrastructure are not reasons to avoid the technology. They are the framework for deploying it responsibly.

This article examines thirteen structural constraints that illustrate the limitations of AI in investment infrastructure. For the broader infrastructure environment where these systems operate, see the Investment Infrastructure pillar. For how AI is actively used despite these constraints, see How AI Is Used in Investment Infrastructure.

The Bank for International Settlements (BIS), the IMF, and the OECD all study how artificial intelligence affects financial stability and regulatory oversight. Their consistent finding is that AI capabilities and AI limitations must be evaluated together for responsible deployment.

In Simple Terms: The Limitations of AI in Investment Infrastructure

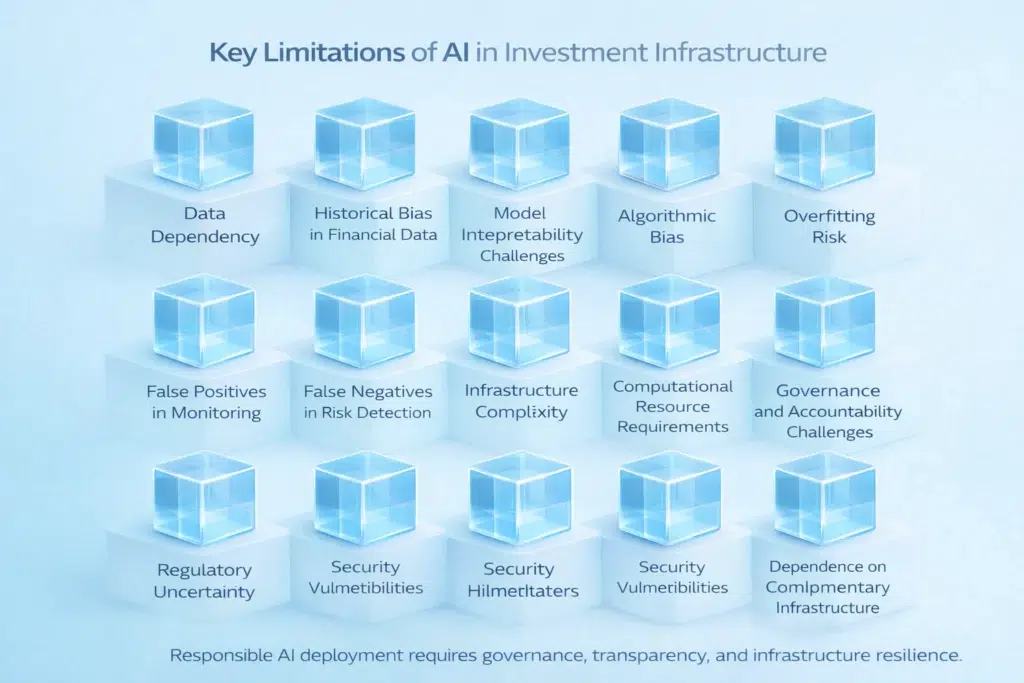

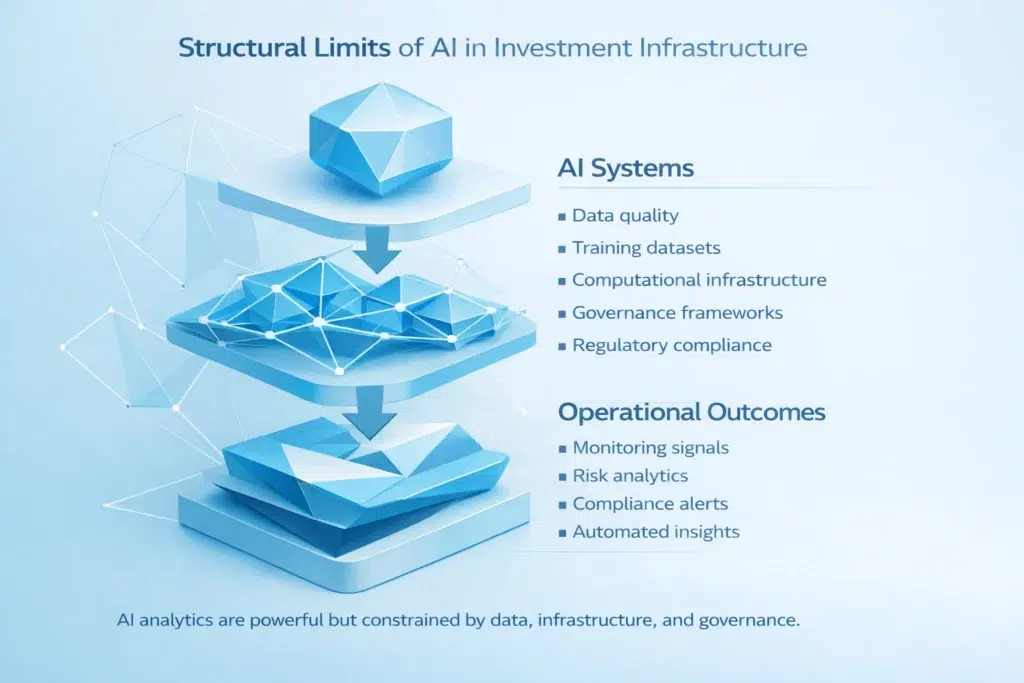

The limitations of AI in investment infrastructure arise because artificial intelligence systems rely on data quality, computational infrastructure, and governance oversight. AI can analyze large datasets and detect patterns across financial activity faster than any human team. But structural constraints remain regardless of how powerful the system is.

Think of it as the librarian problem. The librarian (AI) knows everything in the library (the training data). But if the books are wrong, the librarian is wrong. If the market changes in a way no book has captured yet, the librarian has no answer. And sometimes the librarian will confidently state a fact that was never in any book at all, because the system was designed to produce answers, not admissions of uncertainty. You still need the Library Director (the human) to validate the work before major decisions are made.

Some of the most critical limitations of AI in investment infrastructure include reliance on historical training data, lack of transparency in complex algorithms, risk of biased or fabricated outputs, infrastructure complexity, and governance and regulatory oversight requirements. Understanding these constraints helps institutions implement AI systems responsibly.

Why Understanding the Limitations of AI in Investment Infrastructure Matters

Financial infrastructure supports investment systems, digital asset markets, and banking environments that process enormous volumes of capital and financial activity. Because these systems operate within regulated financial environments, institutions cannot simply deploy AI and hope for the best. They must evaluate what can go wrong just as carefully as what can go right.

Recognizing the limitations of AI in investment infrastructure allows financial institutions to design infrastructure that combines AI analytics with governance frameworks and transparency mechanisms. AI often operates alongside rule-based monitoring systems (automated systems that follow fixed, pre-written rules rather than learning from data). The structural comparison between these approaches is explained in AI vs Rule-Based Systems in Investment Platforms.

13 Structural Constraints: The Limitations of AI in Investment Infrastructure

The following sections examine thirteen key constraints that illustrate the limitations of AI in investment infrastructure.

Constraint 1: Data Dependency

Artificial intelligence models rely heavily on training datasets (the historical information used to teach the model what patterns to recognize and what outputs to produce). If the datasets are incomplete, inaccurate, or structurally biased, the output the AI system generates will reflect those flaws. This is the foundational limitation: the librarian can only answer well if the books are accurate. Data dependency represents one of the most significant limitations of AI in investment infrastructure because institutions often cannot fully control the quality of the data that flows into complex models at scale.
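One way to make data dependency concrete is a validation pass that runs before any record reaches a model. The sketch below is illustrative only: the `TransactionRecord` layout, the `find_quality_issues` helper, and the rule that negative amounts are suspect are all assumptions, not a description of any particular institution's pipeline.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class TransactionRecord:
    # Hypothetical record layout, used only for illustration.
    record_id: str
    amount: Optional[float]
    currency: Optional[str]


def find_quality_issues(records: List[TransactionRecord]) -> List[Tuple[str, str]]:
    """Return (record_id, problem) pairs for records that fail basic checks."""
    issues = []
    seen_ids = set()
    for rec in records:
        if rec.record_id in seen_ids:
            issues.append((rec.record_id, "duplicate id"))
        seen_ids.add(rec.record_id)
        if rec.amount is None:
            issues.append((rec.record_id, "missing amount"))
        elif rec.amount < 0:
            # Assumption: negative amounts are unexpected in this dataset.
            issues.append((rec.record_id, "negative amount"))
        if not rec.currency:
            issues.append((rec.record_id, "missing currency"))
    return issues


sample = [
    TransactionRecord("t1", 100.0, "USD"),
    TransactionRecord("t2", None, "USD"),
    TransactionRecord("t2", 50.0, ""),
    TransactionRecord("t3", -5.0, "EUR"),
]
for record_id, problem in find_quality_issues(sample):
    print(record_id, problem)
```

Checks like these catch only the flaws someone thought to write a rule for. Structural bias in otherwise well-formed data passes straight through.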

Constraint 2: Historical Bias and Model Drift

Many AI models rely on historical financial datasets to identify patterns. This creates two related problems. The first is straightforward: historical patterns may not accurately represent future market conditions. The second is more subtle and often underappreciated. It is called Model Drift (also called Concept Drift or Model Decay: the gradual degradation of an AI model’s accuracy as the real-world conditions it was trained on change over time). An AI system trained during a prolonged Bull Market (a period of rising asset prices and strong economic growth) will have learned rules that simply do not apply during a Bear Market (a period of falling prices and economic contraction). The model’s internal logic becomes increasingly disconnected from reality, but without active monitoring, the system continues producing outputs as if nothing has changed. Historical bias and model drift together make these limitations of AI in investment infrastructure particularly dangerous in volatile market environments.
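Active monitoring for drift can be as simple as comparing recent prediction errors against the errors observed when the model was validated. The following is a minimal sketch under stated assumptions: the `drift_alert` function, the error windows, and the `tolerance` multiplier of 1.5 are hypothetical choices for illustration, not an industry standard.

```python
from statistics import mean
from typing import Sequence


def drift_alert(baseline_errors: Sequence[float],
                recent_errors: Sequence[float],
                tolerance: float = 1.5) -> bool:
    """Flag drift when the recent average error exceeds the baseline
    average error by more than the given multiple."""
    if not baseline_errors or not recent_errors:
        raise ValueError("both error windows must be non-empty")
    return mean(recent_errors) > tolerance * mean(baseline_errors)


# Absolute prediction errors recorded at validation time (illustrative values).
baseline = [0.9, 1.1, 1.0, 1.0]
# Errors from two later periods: one similar to validation, one much worse.
stable_period = [1.0, 1.2, 0.9, 1.1]
degraded_period = [1.8, 2.2, 2.0, 2.4]

print(drift_alert(baseline, stable_period))    # False
print(drift_alert(baseline, degraded_period))  # True
```

A check of this kind depends on realized outcomes being available to compute errors. Where outcomes arrive late, institutions often also watch for shifts in the input data itself.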

Constraint 3: Model Interpretability Challenges

Some AI models operate using complex statistical architectures, particularly deep learning systems (AI models that process information through many layered computations, inspired loosely by how neurons in the brain connect), that produce accurate outputs but cannot explain how they reached those outputs. When institutions cannot clearly trace how an AI model generated a specific result, governance challenges arise immediately. Regulators cannot approve what they cannot examine. Compliance officers cannot validate what they cannot understand. This is precisely the Black Box Problem discussed in depth in Why AI Requires Transparency in Financial Infrastructure. Model interpretability challenges represent a major governance-related limitation of AI in investment infrastructure.

Constraint 4: AI Hallucinations

This constraint deserves specific attention because it is both widely misunderstood and potentially catastrophic in financial contexts. AI Hallucinations (a term describing the tendency of certain AI models, particularly Large Language Models, to confidently generate plausible-sounding but entirely fabricated information when they lack sufficient training data to produce a real answer) are not a minor inconvenience in financial infrastructure. They are a structural failure point.

In finance, a hallucination is not a funny mistake. It is a data fabrication that could lead to incorrect valuations, flawed risk assessments, or trade decisions based on information that does not exist. An AI model that reports a regulatory approval that never happened, or a financial metric that was never published, has not made a guess. It has created a false record. This makes AI hallucinations one of the most critical limitations of AI in investment infrastructure for any system involved in compliance, valuation, or reporting functions.
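A common defensive pattern is to treat every figure an AI system reports as unverified until it has been matched against a system of record. The sketch below assumes a hypothetical `source_of_record` dictionary and a hypothetical claim format; the 1% `tolerance` is likewise an illustrative choice.

```python
from typing import Dict, Tuple


def verify_claim(claim: dict,
                 source_of_record: Dict[Tuple[str, str], float],
                 tolerance: float = 0.01) -> str:
    """Classify a model-reported figure as confirmed, contradicted, or unverified."""
    key = (claim["entity"], claim["metric"])
    if key not in source_of_record:
        # Nothing on record: the figure may be fabricated, so do not act on it.
        return "unverified"
    recorded = source_of_record[key]
    if abs(recorded - claim["value"]) <= tolerance * abs(recorded):
        return "confirmed"
    return "contradicted"


# Hypothetical system of record, keyed by (entity, metric).
records = {("FundA", "nav_per_share"): 102.50}

claims = [
    {"entity": "FundA", "metric": "nav_per_share", "value": 102.50},
    {"entity": "FundA", "metric": "nav_per_share", "value": 110.00},
    {"entity": "FundA", "metric": "capital_ratio", "value": 0.12},
]
for claim in claims:
    print(claim["metric"], claim["value"], verify_claim(claim, records))
```

The design choice that matters here is the default: a claim with no matching record is labeled "unverified" rather than accepted, so a fabricated output cannot pass by virtue of sounding plausible.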

Constraint 5: Algorithmic Bias

Artificial intelligence models may unintentionally replicate biases present in their training datasets. If the data used to train the model reflects historical patterns of discrimination (for example, lending decisions that systematically disadvantaged certain geographic areas or demographic groups), the AI system will learn those patterns as normal and reproduce them in its outputs. Algorithmic bias is difficult to detect without transparency mechanisms and Explainable AI (XAI) (AI systems designed to show which factors drove each decision, making bias detectable and correctable). Without these tools, institutions may deploy systems that perform well on aggregate statistical measures while systematically producing unfair outcomes. This makes algorithmic bias a significant limitation of AI in investment infrastructure from both an ethical and regulatory compliance perspective.
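One simple screening measure for this kind of bias is to compare outcome rates across groups. The sketch below is a rough illustration, not a complete fairness audit: the group labels, the `approval_rates` and `rate_ratio` helpers, and the 0.8 review threshold are all assumptions made for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Compute the share of approved decisions for each group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def rate_ratio(rates: Dict[str, float]) -> float:
    """Ratio of the lowest group approval rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # No approvals anywhere, so no disparity between groups.
    return min(rates.values()) / highest


# Illustrative model decisions: (group label, approved?)
decisions = (
    [("region_a", True)] * 8 + [("region_a", False)] * 2
    + [("region_b", True)] * 4 + [("region_b", False)] * 6
)
rates = approval_rates(decisions)
ratio = rate_ratio(rates)
REVIEW_THRESHOLD = 0.8  # Hypothetical cut-off for escalating to human review.
print(rates, ratio, ratio < REVIEW_THRESHOLD)
```

A low ratio does not prove discrimination, and a high one does not rule it out. It only indicates where a human reviewer should look more closely.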

Constraint 6: Overfitting Risk

Overfitting (a technical condition where an AI model becomes so precisely calibrated to its historical training data that it loses the ability to generalize to new situations) is a well-known limitation of AI in investment infrastructure. A model that has been overfitted will perform extremely well in controlled testing environments using historical data but produce unreliable results when it encounters real-time data that differs from its training set. Financial markets are inherently non-stationary: conditions change, correlations shift, and edge cases occur. Paradoxically, an overfitted model can look strongest on historical benchmarks precisely when it is least reliable on new data.
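The gap between performance on training data and performance on unseen data is the usual diagnostic for overfitting. The toy example below uses a deliberately extreme model that memorizes its training points (a one-nearest-neighbor lookup). The data values and function names are invented for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (input, observed value)


def memorizing_predict(train: List[Point], x: float) -> float:
    """Return the observed value of the closest training input (pure memorization)."""
    return min(train, key=lambda pair: abs(pair[0] - x))[1]


def mean_abs_error(train: List[Point], data: List[Point]) -> float:
    """Average absolute error of the memorizing model on the given data."""
    return sum(abs(memorizing_predict(train, x) - y) for x, y in data) / len(data)


# Noisy training observations around a roughly linear relationship.
train = [(1, 1.2), (2, 1.7), (3, 3.4), (4, 3.8)]
# Held-out observations the model never saw.
holdout = [(1.4, 1.4), (2.6, 2.6), (3.6, 3.6)]

train_error = mean_abs_error(train, train)      # 0.0: every training point is recalled exactly
holdout_error = mean_abs_error(train, holdout)  # noticeably higher on unseen inputs
print(train_error, holdout_error)
```

A perfect training score here says nothing about reliability. Only the held-out error reflects how the model behaves on data it has not memorized.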

Constraint 7: False Positives in Monitoring Systems

AI monitoring systems may generate False Positives (alerts that signal a risk event or compliance concern that does not actually exist). In financial infrastructure, false positives create real operational costs: compliance teams must investigate each alert, trading desks may pause activity unnecessarily, and risk management resources are diverted from genuine concerns. At scale, a system generating significant false positive rates can create Alert Fatigue (a condition where teams begin to ignore alerts because too many have proven unfounded), which paradoxically makes the institution more vulnerable to genuine risks buried in noise. False positives represent an important operational limitation of AI in investment infrastructure.

Constraint 8: False Negatives in Risk Detection

The inverse problem is False Negatives (cases where AI monitoring systems fail to detect genuine risk events). While false positives are operationally expensive, false negatives are potentially catastrophic. Missing a genuine AML (Anti-Money Laundering: the regulatory requirement to detect and prevent financial crimes including money laundering and terrorist financing) signal, a systemic risk indicator, or a fraud pattern can expose an institution to regulatory sanctions, financial losses, and reputational damage. False negatives represent another significant limitation of AI in investment infrastructure because the system appears to be working normally while silently failing at its core function.
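False positives and false negatives are typically measured together by comparing alerts against confirmed outcomes. The sketch below counts both error types and derives precision (the share of alerts that were genuine) and recall (the share of genuine events that were caught). The sample data and helper names are illustrative assumptions.

```python
from typing import Dict, Sequence


def confusion_counts(alerts: Sequence[bool], actual: Sequence[bool]) -> Dict[str, int]:
    """Count true/false positives and negatives for a monitoring system."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for alerted, real in zip(alerts, actual):
        if alerted and real:
            counts["tp"] += 1
        elif alerted and not real:
            counts["fp"] += 1  # False positive: alert with no real event.
        elif not alerted and real:
            counts["fn"] += 1  # False negative: real event with no alert.
        else:
            counts["tn"] += 1
    return counts


def precision(counts: Dict[str, int]) -> float:
    flagged = counts["tp"] + counts["fp"]
    return counts["tp"] / flagged if flagged else 0.0


def recall(counts: Dict[str, int]) -> float:
    real_events = counts["tp"] + counts["fn"]
    return counts["tp"] / real_events if real_events else 0.0


alerts = [True, True, False, False, True, False]   # what the system flagged
actual = [True, False, False, True, False, False]  # what investigation confirmed
counts = confusion_counts(alerts, actual)
print(counts, precision(counts), recall(counts))
```

Tuning a system to raise one of these measures usually lowers the other, which is why the tolerance for each error type is a governance decision rather than a purely technical one.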

Constraint 9: Infrastructure Complexity

Deploying AI in financial infrastructure requires multiple complex components to function simultaneously and reliably: data pipelines (automated systems that collect, clean, and route data to the AI model), training environments (dedicated computational infrastructure for building and updating models), real-time inference systems (the infrastructure that applies the trained model to live data), and monitoring systems (tools that track model performance and detect degradation). Each component can fail independently. Managing the interdependencies between them is an ongoing operational challenge. This technical complexity represents a meaningful limitation of AI in investment infrastructure, particularly for smaller institutions that may lack the engineering capacity to maintain all layers simultaneously.

Constraint 10: Computational Resource Requirements

AI systems require significant computational power for training models and analyzing large datasets in real time. These resource requirements translate directly into infrastructure costs (cloud computing costs, hardware investment, energy consumption) and operational complexity. Training a large AI model may require thousands of computing hours and substantial energy expenditure. These costs represent a practical limitation of AI in investment infrastructure, particularly for applications requiring continuous retraining as market conditions evolve.

Constraint 11: Governance and Accountability Challenges

Financial institutions must establish governance frameworks (documented systems defining who is responsible for decisions, how outcomes are monitored, and what remediation procedures apply when systems fail) for every automated system they deploy. For AI, this is particularly challenging because the outputs may be difficult to explain, the training process may not be fully transparent, and the accountability chain between a model’s decision and a human responsible for it may be unclear. AI monitoring systems must include oversight mechanisms, model validation procedures, and audit frameworks. Governance complexity represents one of the most underappreciated limitations of AI in investment infrastructure because it is an organizational and legal challenge as much as a technical one.

Constraint 12: Regulatory Uncertainty

Regulators in most jurisdictions are still developing frameworks for evaluating artificial intelligence in financial infrastructure. The EU AI Act (widely described as the world’s first comprehensive AI regulation) classifies certain financial services uses of AI, such as assessing the creditworthiness or credit score of individuals, as High-Risk, requiring specific transparency, oversight, and documentation standards. But outside the EU, regulatory clarity varies significantly. Institutions must evaluate compliance risks carefully when deploying AI monitoring systems, particularly for functions that directly influence lending decisions, credit scoring, or investment recommendations. Regulatory uncertainty is itself one of the limitations of AI in investment infrastructure because it creates legal ambiguity about what responsible deployment actually looks like in different jurisdictions.

Constraint 13: Security Vulnerabilities

Artificial intelligence systems may become targets for cyberattacks or intentional manipulation. Three attack types are particularly relevant to financial infrastructure. Data Poisoning Attacks deliberately corrupt the training data that the model learns from, causing it to produce systematically flawed outputs after retraining. Adversarial Inputs are specially crafted data inputs designed to fool the model into producing incorrect classifications or outputs. Model Manipulation is direct interference with the model’s parameters or decision logic. These security risks represent an emerging but serious dimension of the limitations of AI in investment infrastructure that traditional cybersecurity frameworks were not designed to address. For complementary transparency and verification tools that help mitigate some of these risks, see On-Chain Transparency Explained and What Is Proof of Reserve in Blockchain Systems?
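One narrow defence against tampering with training data is to record a cryptographic fingerprint of an approved dataset and recheck it before each retraining run. The sketch below uses Python's standard `hashlib` and `json` modules. The `dataset_fingerprint` helper and the row layout are assumptions made for illustration.

```python
import hashlib
import json
from typing import Dict, List


def dataset_fingerprint(rows: List[Dict]) -> str:
    """Return a SHA-256 hex digest of the rows in a canonical JSON form."""
    # sort_keys gives the same serialization regardless of key order in each row.
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


approved_rows = [
    {"id": "t1", "amount": 100.0, "label": "legitimate"},
    {"id": "t2", "amount": 9500.0, "label": "suspicious"},
]
approved_digest = dataset_fingerprint(approved_rows)

# Later, before retraining: a label has been flipped without authorization.
current_rows = [
    {"id": "t1", "amount": 100.0, "label": "legitimate"},
    {"id": "t2", "amount": 9500.0, "label": "legitimate"},
]
if dataset_fingerprint(current_rows) != approved_digest:
    print("Training data changed since approval; halt retraining and investigate.")
```

This detects changes made after the fingerprint was recorded. It does not help if the data was already poisoned when it was approved, and it does nothing against adversarial inputs at inference time.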

Architecture Snapshot: Constraint Risk Summary

| Constraint | Infrastructure Risk | Operational Impact |
|---|---|---|
| Data Dependency | Analytical error from poor training data | Monitoring accuracy undermined at source |
| Model Drift | Gradual model distortion as markets evolve | Decision reliability degrades silently over time |
| AI Hallucinations | Data fabrication in outputs | Risk of flawed trade decisions or incorrect valuations |
| Overfitting | Model too calibrated to historical data | Forecast reliability collapses on new data |
| False Positives | Operational alert noise | Alert fatigue and wasted compliance resources |
| False Negatives | Missed genuine risk signals | Regulatory exposure and financial loss |
| Security Vulnerabilities | Data poisoning and adversarial attacks | System integrity and institutional protection at risk |

Mitigating Limitations with Human-in-the-Loop Governance

Listing thirteen constraints without discussing how to address them would be incomplete. The most widely adopted institutional solution to the limitations of AI in investment infrastructure is HITL (Human-in-the-Loop governance: a system design where AI provides analytical outputs but humans retain decision-making authority and oversight at defined checkpoints, rather than allowing automated systems to act independently).

Think of it as the difference between autopilot and a self-flying plane with no cockpit. Autopilot handles routine navigation efficiently while the pilot monitors systems and retains control at critical moments. A self-flying plane with no cockpit oversight is a different proposition entirely, and not one any regulatory authority currently endorses for commercial aviation or financial infrastructure.

In practical terms, HITL governance means that AI flags risks but humans confirm action. AI surfaces patterns but humans evaluate context. AI generates valuations but humans validate methodology. This Co-pilot model captures the efficiency benefits of AI (processing speed, pattern recognition at scale, 24/7 monitoring) while preserving human accountability for decisions that carry regulatory, legal, or fiduciary weight. The limitations of AI in investment infrastructure are significantly mitigated when HITL governance is properly designed and consistently enforced.
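The "AI flags, humans confirm" pattern can be expressed as a gate that blocks any action until a named reviewer has recorded a decision. The sketch below is one possible shape for such a gate. The `RiskFlag` fields and the `record_review` and `may_execute` helpers are hypothetical names, not a reference to any specific platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class RiskFlag:
    # Hypothetical structure for an AI-generated flag awaiting human review.
    flag_id: str
    model_score: float
    reviewer: Optional[str] = None
    approved: Optional[bool] = None


def record_review(flag: RiskFlag, reviewer: str, approved: bool) -> None:
    """Attach a named human decision to the flag for accountability."""
    if not reviewer:
        raise ValueError("a named reviewer is required")
    flag.reviewer = reviewer
    flag.approved = approved


def may_execute(flag: RiskFlag) -> bool:
    """Allow action only after an explicit human approval, whatever the model score."""
    return flag.reviewer is not None and flag.approved is True


flag = RiskFlag(flag_id="f-001", model_score=0.97)
print(may_execute(flag))  # False: a high model score alone does not authorize action
record_review(flag, reviewer="analyst_1", approved=True)
print(may_execute(flag))  # True: a named human has confirmed the action
```

Note that `model_score` never appears in `may_execute`. In this design, the model informs the reviewer but has no authority of its own.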

Institutional Perspective

Financial institutions evaluating artificial intelligence technologies must consider both the capabilities and the limitations of AI in investment infrastructure as equally important inputs to deployment decisions. Important evaluation criteria include transparency of algorithms (can the model explain its outputs?), governance frameworks (who is accountable for what?), regulatory compliance integration (does the system meet applicable regulatory standards, including the EU AI Act High-Risk requirements where they apply?), reliability and documentation of training datasets, infrastructure resilience (what happens when components fail?), and active model drift monitoring protocols.

The BIS, IMF, and OECD analyze how artificial intelligence affects financial stability and regulatory oversight. Their research consistently supports a balanced view: the limitations of AI in investment infrastructure are manageable when institutions deploy AI with appropriate governance, transparency tools, and human oversight rather than treating automated outputs as self-validating.

Frequently Asked Questions

What are the limitations of AI in investment infrastructure?

The limitations of AI in investment infrastructure include data dependency, historical bias and model drift, model interpretability challenges, AI hallucinations (fabricated outputs), algorithmic bias, overfitting risk, false positives, false negatives, infrastructure complexity, computational resource requirements, governance and accountability challenges, regulatory uncertainty, and security vulnerabilities. Each constraint requires specific governance and monitoring mechanisms to manage responsibly.

What are AI hallucinations and why do they matter in finance?

AI hallucinations are instances where an AI model confidently generates plausible-sounding but entirely fabricated information. In financial infrastructure, a fabricated regulatory approval, an invented financial metric, or a non-existent market event reported as fact by an AI system can drive trade decisions, valuations, or compliance reports based on information that does not exist. This makes hallucinations one of the most serious limitations of AI in investment infrastructure for any system involved in reporting or decision support.

What is Model Drift and why does it matter?

Model Drift (also called Concept Drift or Model Decay) is the gradual degradation of an AI model’s accuracy as real-world conditions change away from the conditions captured in its training data. An AI trained on Bull Market data develops rules suited to rising markets. When a Bear Market arrives, those rules become unreliable without the system flagging its own degradation. Active model performance monitoring and scheduled retraining are the standard institutional responses to this limitation of AI in investment infrastructure.

What is Human-in-the-Loop (HITL) governance?

HITL governance (Human-in-the-Loop: a system design where AI provides analytical outputs but humans retain decision authority at defined checkpoints) is the most widely adopted institutional approach to managing the limitations of AI in investment infrastructure. AI flags, surfaces, and analyzes. Humans validate, decide, and bear accountability for outcomes. This Co-pilot model captures AI efficiency gains while preserving the human oversight that financial regulation requires.

Can AI monitoring systems produce incorrect results?

Yes. Artificial intelligence monitoring systems may produce false positives (alerts for non-existent risks), false negatives (missed genuine risks), and hallucinations (fabricated outputs). These represent key limitations of AI in investment infrastructure that HITL governance, model validation, and transparency mechanisms are designed to detect and manage.

Why do regulators examine AI monitoring systems?

Regulators evaluate artificial intelligence systems to ensure accountability, transparency, and financial system stability. The EU AI Act classifies certain financial services uses of AI, such as assessing the creditworthiness or credit score of individuals, as High-Risk, with requirements covering documentation, transparency, and human oversight. Regulatory examination of AI is a direct response to the limitations of AI in investment infrastructure, particularly around interpretability, bias, and accountability gaps.

Conclusion

Understanding the limitations of AI in investment infrastructure is essential for responsible deployment of artificial intelligence within financial systems. AI provides genuinely powerful analytical capabilities: processing speed no human team can match, pattern recognition across datasets too large for manual review, and 24/7 monitoring consistency. None of that disappears when you acknowledge the constraints.

But the Library Director still needs to check the librarian’s work. Data dependency, model drift, AI hallucinations, algorithmic bias, overfitting, false signals, infrastructure complexity, governance gaps, regulatory uncertainty, and security vulnerabilities are all real constraints that require real governance responses. By combining AI with Human-in-the-Loop governance frameworks, Explainable AI transparency tools, and active model monitoring, financial institutions can deploy AI technologies responsibly within modern investment infrastructure. The limitations of AI in investment infrastructure are not obstacles to adoption. They are the blueprint for adoption done correctly.

Sources and Regulatory References

- Bank for International Settlements (BIS): https://www.bis.org

- International Monetary Fund (IMF): https://www.imf.org

- Organisation for Economic Co-operation and Development (OECD): https://www.oecd.org


Last updated: March 2026

Explore AI in Investment Infrastructure

- AI in Investment Infrastructure Explained

- How AI Is Used in Investment Infrastructure

- What Role Does AI Play in Risk Management Infrastructure?

- Why AI Requires Transparency in Financial Infrastructure

- AI vs Rule-Based Systems in Investment Platforms

- On-Chain Transparency Explained (cross-pillar)

- Transparency Reduces Risk in Tokenized Assets (cross-pillar)

- Why Compliance Matters in Tokenized Finance (cross-pillar)

- How Regulation Improves Transparency in Tokenized Finance (cross-pillar)

- Investment Infrastructure Hub